The ALIGNER Fundamental Rights Impact Assessment

Artificial intelligence can greatly enhance the capabilities of law enforcement agencies (LEAs) to prevent, investigate, detect and prosecute crimes, as well as to predict and anticipate them. However, despite its many promised benefits, the use of AI systems in law enforcement raises a wide range of ethical and legal concerns.

On behalf of Project ALIGNER, the KU Leuven Centre for IT & IP Law (CiTiP) and CBRNE Ltd have jointly developed the ALIGNER Fundamental Rights Impact Assessment (AFRIA), a tool for LEAs that intend to deploy AI systems for law enforcement purposes within the EU. The AFRIA is a reflective exercise that seeks to strengthen LEAs' existing legal and ethical governance systems by helping them build and demonstrate compliance with ethical principles and fundamental rights when deploying AI systems.

The AFRIA consists of two connected and complementary templates:

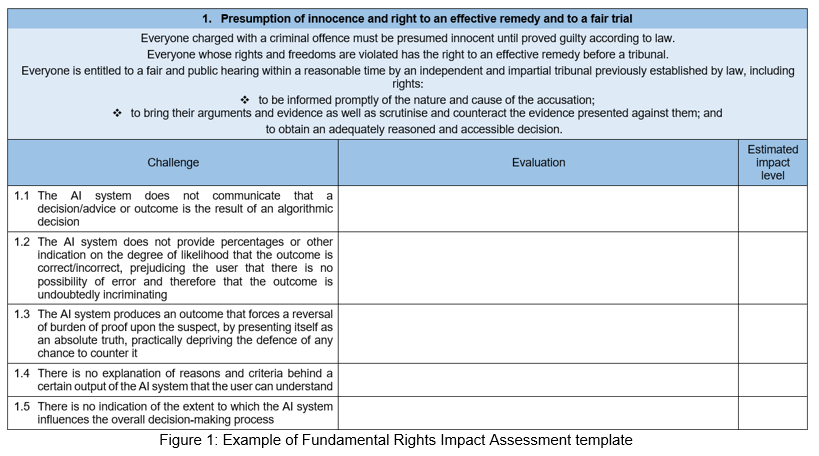

- The Fundamental Rights Impact Assessment template, which helps LEAs identify and assess the impact of their AI systems on those fundamental rights most likely to be infringed; and

- The AI System Governance template, which helps LEAs identify the relevant ethical standards for trustworthy AI and mitigate the impact of their AI systems on fundamental rights.

You can find out more about the tool here. Requests for information should be directed to Donatella Casaburo (donatella.casaburo@kuleuven.be) or Irina Marsh (irina.marsh@cbrneltd.com).